I recently conducted a session on “Software Licensing: The Basic Concepts.”

Just after I mentioned the term, “license”, questions flooded in.

One of those was quite interesting, “How do we create a copyright license?”

I couldn’t get to it in time that day, so I thought, why not answer it here on the blog?

I am jotting down some best practices regarding choosing and declaring a license. Knowing something in theory and implementing it in real life are two completely different things.

Here I will be stressing real life scenarios.

These are the best possible ways of shouting out to the world,

“My project is licensed under this license.

If you want to use it please follow the rules made thereunder.”

Our discussion can be divided into two parts:

How to choose a license for your project?

Choosing a license is always difficult. It’s like choosing your favorite superhero character in the Avengers. You go and

- read different things out there,

- watch videos,

- ask friends (who you think, know more than you about licenses).

But the stuff above holds true with only 15% of the whole developer lot (I am being really conservative about the percentage).

Most of them choose a license,

- to end the frustration of reading the license document

- by picking the first one that appears in any list provided

- by following their friends’ choices

- by defaulting to the license, you first heard of,

- because you share similar values as the license project itself

The following are some basic ways of choosing a license in a proper manner:

1. Understand the License:

For choosing one amongst thirty different things, one has to comprehend the generic concept of what said thing is. When choosing between different laptops, one has to recognize what a laptop is.

So for choosing a license one has to know what license is (in general.)

License means permission.

It is a way of authorizing somebody to use something that you own, have a right to; your property. In the case of software licenses, we are dealing with Copyright. The license document specifies the terms, rules you have to follow to use someone else’s copyrighted software.

Let’s make life simple. Let us take a real life example of a driving license. What does a driving license do? It gives you the permission to drive a vehicle on the road. Similarly, a software license gives you the permission to do certain things with software. In the case of a driving license the motor vehicle authority gives you the permission, and for the software license, the owner of the software gives you permission. The motor vehicle authority is empowered by the govt to issue a driving license whereas in the software realm (just as with writing) the owner gets his authority under one of the legal rights governing intellectual realms called copyright. (I’m stretching the analogy a bit here, but it makes the picture clear).

2. Understand the License:

In our laptop example above, in order to choose the right laptop, you need to know the configuration that’ll suit you. Are you writer? A gamer? A coder? Watch movies? Knowing what you need is important.

The same theory holds with software licenses.

The first step to understand a license is to:

A. Read the license document carefully before you a choose one Go through the license document itself. Yes, I know! It’s big, it’s boring and legal. But once you understand it, you are sorted for quite a long time.

Reading the license document will give you a clear idea of -

i. The rights you are actually licensing.

ii. What the license requires of you. Each license stipulates certain conditions. It requires you to do certain things; to follow certain steps, for them to be applicable. Let’s take the MIT license as an example. There are many packages are marked as being under the MIT license in their meta data, but don't actually include a copy of the license text with their code. In fact, the MIT license specifically states that you need to include the permission notice in all copies or substantial portions of the Software. Therefore in this instance, the terms of the MIT License don’t actually apply to the software, though it was intended to be.

B. License matching the use case Choose a license matching the use case of your software. A license that accomplishes the goal of your software. A license actually shows your aim, your intention, and what you want to do with the software to the users; so be very careful when choosing one.

C. Spent some time choosing the license

Your license is a very important document. It will decide the future of your project. Therefore, once again, be very careful while choosing it.

Do not hurry. Choosing a license is similar to investing in mutual funds. Do your homework and be intentional and sure in the choosing now, than be sorry in the future.

3. There are some basic points one may keep in mind to choose the license:

A. When freedom is important If you care about freedom and sharing improvements to the community and society, then the GNU GPL is the license for you. In the case of the GPL, you need to distribute the original source code along with the modifications you have made.

B. Patent Concerns When Patent is your primary concern, then the Apache and similar licenses which have the “Grant of Patent” is your solution.

“Grant of Patent” ensures that the end user can use the software without any legal threats related to patents.

Let’s try to understand this with an example. A company has a patent on a particular software algorithm. Then that same company releases an open source library that implements said algorithm. If it is released under a license that does not include a patent grant, it (the company itself) could sue people using their (open source) software under patent law.

By licensing the code under a license that includes a patent grant, a company is saying “We’re not going to sue you for using any patents related to the software which we've made open source.”

C. Permissive nature: If you want something that anyone can use, for any purpose, even proprietary usage, then you might want to opt for licenses permissive in nature, such as the MIT & the BSD licenses.

4. And you are confused now? Where do I go? What to choose from? What are my options?

Here are a few solutions:

A. Free Software Foundation: It maintains a well-drafted FAQ answering all your queries regarding its free software licenses. Before choosing a license you might want to have a look at it

B. OSI OSI the open source initiative has a list of the open source licenses and guidelines to do the same.

C. choosealicense.com This handy website by GitHub actually walks you through most requirements and suggests an apt license

D. Available licenses Looking for more options? You can have look at the licensing wiki of Fedora project. It is a really nice page maintained by Tom Spot Callaway.

E. Choose a popular license If you are confused that what license to choose, go for those ones which are popularly used by the community (more specifically, by the people you want to work with.) To make your project widely used one should choose a popular license. It will be easier for the community to use your software without being worried about compatibility issues.

5. Cautionary statement:

1. NO license invention Please do not invent your own license. There are plenty of nicely drafted licenses meeting all your requirements. Trust the legal experts; they know the law.

2. Keep your funny bones aside

If you ignore all the caution and try to draft your own license try not to have clauses like "Buy me a beer" or "Don't be evil" (please keep your funny bones to yourselves). Not that I have an objection if the developer/user treats you to a bear nor am I a great supporter of evil.

It’s just that the legal implications of these are different.

If you are confused about what licenses to choose, you may look into various lists provided by the different websites above.

How do I create a license?

To me, that’s just another way to ask the same thing above.

Remember - “Don’t Invent Your Own License!”

What you actually want to know, is how to let the world know what license your project actually uses.

Here are some basic steps:

1. Create a LICENSE file

Create a LICENSE file, generally a plain text file. The file should have the name of the license as well as the full license text of the license document.

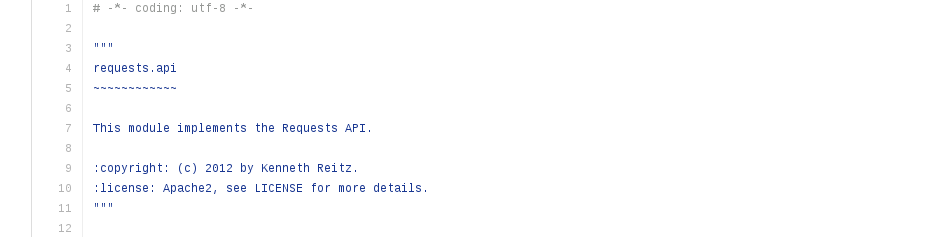

2. Add a copyright header

Add a copyright header to each significant source code file. By significant, I mean the volume and importance both. And modify it from time to time, as you make new releases.  In the above example, you can see that the name of the author and the license, the year has been mentioned.

In the above example, you can see that the name of the author and the license, the year has been mentioned.

3. Mention the license in the setup.py (in the case of a Python project)

The name of the license should be mentioned in the setup function within setup.py

4. License in a README file

If you have a README or equivalent introductory file, containing all the basic information of the project. State the name of the license there and refer the reader to the LICENSE file.

I have tried to provide you with a small, tiny mini, checklist so that it is helpful for you while you choose a license for your project.

Go forth & License!

Photo Credit:

Photo Credit: